Most AI memory examples focus on recall volume. That is not the hard part. The hard part starts when memory is used in real workflows where bad writes, stale facts, weak retrieval, or context overflow can change behavior silently.

In this issue, we build a local-first memory architecture. The scenario we use is a job search assistant, but the design is reusable for other real-world AI systems. The model helps with semantic representation through embeddings. Deterministic code owns memory safety, retrieval control, context budgeting, and answer boundaries.

What You Are Building

You are building a local-first, production-shaped memory architecture in which memory is treated as a controlled system component, not an unbounded chat history. The application captures short-lived session facts and longer-lived user preferences, combines them with knowledge evidence, and produces grounded responses that stay within explicit runtime limits. The goal is to keep memory useful for personalization and continuity while preserving predictable behavior under real operational conditions.

This is not uncontrolled long-term memory; it is a bounded memory architecture.

System Structure

Apart from the embedding signals used for retrieval, every path through the system is deterministic:

- Write path: a policy check and a sanitizer gate every memory write before storage.

- Retrieval path: embeddings when available, with lexical fallback when embedding fails.

- Assembly path: the query, memory entries, and knowledge hits become budgeted context blocks.

- Response path: answer generation is evidence-only and gated by `CanProceed`.

- Evaluation path: reliability metrics are computed from retrieval results and memory state.

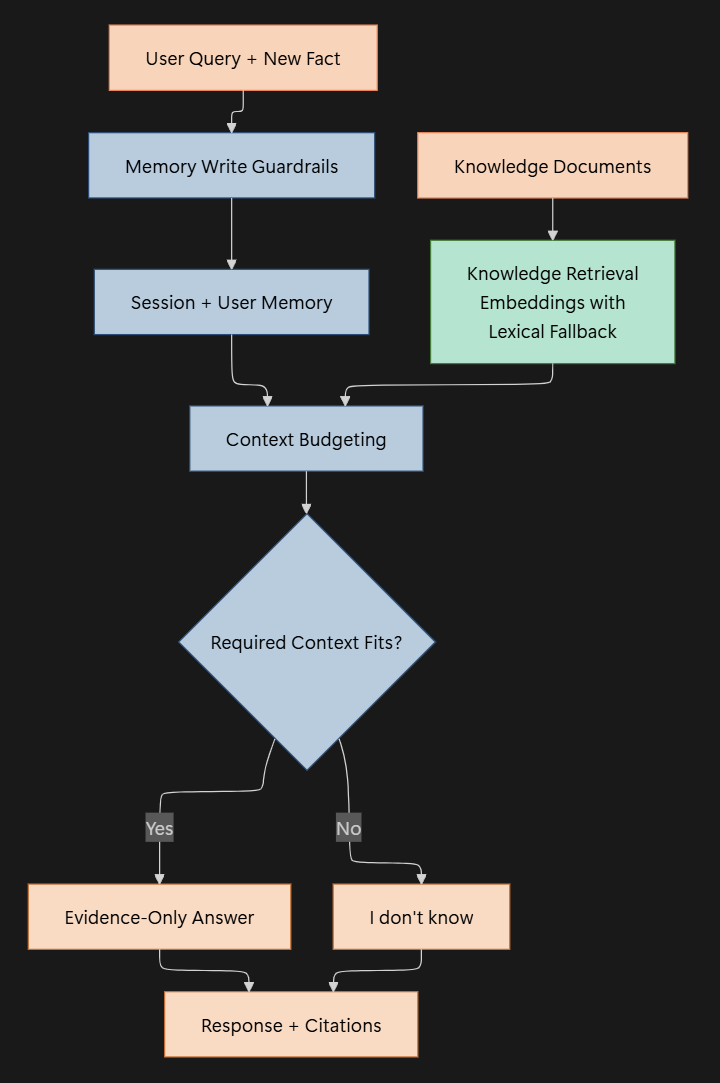

The diagram below shows a high-level control flow of the system:

Runtime Configuration First

Configuration is loaded from `appsettings.json` and from `PMA_`-prefixed environment variables before any memory operation runs:

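```csharp
using Microsoft.Extensions.Configuration;

// Later sources override earlier ones: environment variables win over appsettings.json.
var configuration = new ConfigurationBuilder()
    .SetBasePath(AppContext.BaseDirectory)
    .AddJsonFile("appsettings.json", optional: true, reloadOnChange: false)
    // Only variables starting with PMA_ are read; the prefix is stripped from the key.
    .AddEnvironmentVariables(prefix: "PMA_")
    .Build();

// Load the typed settings (AppConfig is part of the project, not shown here)
// and reject invalid values before any memory operation runs.
var config = AppConfig.Load(configuration);
config.Validate();
```

The context budget is an explicit runtime contract: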

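```csharp
// Tokens left for context blocks after reserving output and fixed prompt space,
// clamped to at least 1.
public int AvailableContextTokens =>
    Math.Max(1, ModelContextWindowTokens - ReservedOutputTokens - FixedPromptTokens);
```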

No valid budget, no run.
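Because `AvailableContextTokens` clamps to a minimum of 1, the property on its own never reveals a bad configuration; the rejection has to happen in validation. The article does not show `AppConfig.Validate()`, so the following is a minimal, hypothetical sketch of such a budget check (`ValidateBudget` and its messages are illustrative names, not the project's API), written as a standalone C# program with top-level statements (.NET 6 or later):

```csharp
using System;

// HYPOTHETICAL stand-in for AppConfig.Validate(): only the budget rule is shown.
static int AvailableContextTokens(int windowTokens, int reservedOutputTokens, int fixedPromptTokens) =>
    Math.Max(1, windowTokens - reservedOutputTokens - fixedPromptTokens);

static void ValidateBudget(int windowTokens, int reservedOutputTokens, int fixedPromptTokens)
{
    if (windowTokens <= 0 || reservedOutputTokens < 0 || fixedPromptTokens < 0)
        throw new InvalidOperationException("Token settings must be non-negative, with a positive context window.");

    // Check the raw value, not the clamped one, so a misconfiguration is not hidden.
    var raw = windowTokens - reservedOutputTokens - fixedPromptTokens;
    if (raw <= 0)
        throw new InvalidOperationException($"No context budget left ({raw} tokens).");
}

ValidateBudget(8192, 1024, 512);                             // passes
Console.WriteLine(AvailableContextTokens(8192, 1024, 512)); // 6656

try
{
    ValidateBudget(1000, 800, 300); // 1000 - 800 - 300 = -100
}
catch (InvalidOperationException ex)
{
    Console.WriteLine(ex.Message); // No context budget left (-100 tokens).
}
```

With an 8,192-token window, 1,024 reserved output tokens, and 512 fixed prompt tokens, 6,656 tokens remain for memory and knowledge blocks. The second configuration would silently clamp to 1 token if only the property were consulted.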

Memory Layers with Explicit Lifetime

Session and user memory are independent stores with deterministic IDs and expiry:

```csharp
public void Add(string sessionId, string key, string value, DateTimeOffset nowUtc, TimeSpan ttl)
{
    // Create the per-session list on first write.
    if (!_entriesBySession.TryGetValue(sessionId, out var list))
    {
        list = [];
        _entriesBySession[sessionId] = list;
    }

    // A monotonic counter gives every entry a deterministic ID.
    _sequence++;

    list.Add(new MemoryEntry
    {
        Id = $"SES-{_sequence:0000}",
        Scope = MemoryScope.Session,
        OwnerId = sessionId,
        Key = key.Trim(),
        Value = value,
        CreatedAtUtc = nowUtc,
        ExpiresAtUtc = nowUtc.Add(ttl) // expiry is fixed at write time
    });
}
```

Active facts are filtered by expiry and returned in a stable order:

```csharp
public IReadOnlyList<MemoryEntry> GetActive(string sessionId, DateTimeOffset nowUtc)
{
    if (!_entriesBySession.TryGetValue(sessionId, out var list))
    {
        return [];
    }

    return list
        .Where(x => !x.IsExpired(nowUtc))          // drop expired facts
        .OrderByDescending(x => x.CreatedAtUtc)    // newest first
        .ThenBy(x => x.Id, StringComparer.Ordinal) // deterministic tie-break
        .ToList();
}
```

This gives predictable recency ordering with deterministic tie-breaks.
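To see the TTL and ordering rules in isolation, here is a hypothetical, self-contained sketch of the session layer. It stores tuples instead of the article's `MemoryEntry`, returns only IDs through an illustrative `GetActiveIds` helper, and assumes an entry counts as expired once `nowUtc` reaches `ExpiresAtUtc` (the article's `IsExpired` is not shown):

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// HYPOTHETICAL sketch: tuples stand in for MemoryEntry; not the article's store.
var entriesBySession = new Dictionary<string, List<(string Id, string Key, string Value, DateTimeOffset CreatedAtUtc, DateTimeOffset ExpiresAtUtc)>>();
var sequence = 0;

void Add(string sessionId, string key, string value, DateTimeOffset nowUtc, TimeSpan ttl)
{
    if (!entriesBySession.TryGetValue(sessionId, out var entries))
    {
        entries = new();
        entriesBySession[sessionId] = entries;
    }
    sequence++;
    entries.Add(($"SES-{sequence:0000}", key.Trim(), value, nowUtc, nowUtc.Add(ttl)));
}

List<string> GetActiveIds(string sessionId, DateTimeOffset nowUtc)
{
    if (!entriesBySession.TryGetValue(sessionId, out var entries))
        return new List<string>();

    return entries
        .Where(e => nowUtc < e.ExpiresAtUtc)       // assumption: expired once now >= ExpiresAtUtc
        .OrderByDescending(e => e.CreatedAtUtc)    // newest first
        .ThenBy(e => e.Id, StringComparer.Ordinal) // deterministic tie-break
        .Select(e => e.Id)
        .ToList();
}

var t0 = new DateTimeOffset(2025, 1, 1, 9, 0, 0, TimeSpan.Zero);
Add("s1", "target_role", "Backend engineer", t0, TimeSpan.FromMinutes(30));
Add("s1", "work_mode", "Remote only", t0, TimeSpan.FromMinutes(30));
Add("s1", "interview_day", "Thursday", t0.AddMinutes(5), TimeSpan.FromMinutes(10));

Console.WriteLine(string.Join(", ", GetActiveIds("s1", t0.AddMinutes(6))));  // SES-0003, SES-0001, SES-0002
Console.WriteLine(string.Join(", ", GetActiveIds("s1", t0.AddMinutes(20)))); // SES-0001, SES-0002
```

The two entries written at the same instant always come back as `SES-0001`, `SES-0002`, so repeated runs assemble identical context, and the short-lived third entry disappears on its own once its TTL elapses.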

Deterministic Write Guardrails

Every memory write passes policy checks first:

```csharp
public bool TryPrepareForStorage(string rawValue, out string normalizedValue, out string reason)
{
    normalizedValue = string.Empty;
    reason = string.Empty;

    // Reject empty input.
    if (string.IsNullOrWhiteSpace(rawValue))
    {
        reason = "Value is empty.";
        return false;
    }

    // Reject oversized input before any further processing.
    if (rawValue.Length > MaxWriteChars)
    {
        reason = $"Value exceeds max length {MaxWriteChars} chars.";
        return false;
    }

    // Block secret-like input outright instead of trying to clean it.
    if (MemoryTextSanitizer.ContainsBlockedSecret(rawValue))
    {
        reason = "Value looks like a secret and was blocked.";
        return false;
    }

    // Accepted values are trimmed, and sensitive patterns are redacted before storage.
    normalizedValue = MemoryTextSanitizer.Redact(rawValue.Trim());
    return true;
}
```

Secret detection and redaction are explicit:

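```csharp
// KeyRegex, EmailRegex, and LongNumberRegex are static Regex members of
// MemoryTextSanitizer; their patterns are not shown here.
public static bool ContainsBlockedSecret(string value)
{
    // Phrase checks run on lowercased text.
    var text = value.ToLowerInvariant();
    if (text.Contains("password is", StringComparison.Ordinal) ||
        text.Contains("api key is", StringComparison.Ordinal) ||
        text.Contains("secret is", StringComparison.Ordinal))
    {
        return true;
    }

    return KeyRegex.IsMatch(value);
}

public static string Redact(string value)
{
    var output = EmailRegex.Replace(value, "[REDACTED_EMAIL]");
    // Key-like strings are already blocked on the write path; this is a second line of defense.
    output = KeyRegex.Replace(output, "[REDACTED_KEY]");
    output = LongNumberRegex.Replace(output, "[REDACTED_NUMBER]");
    return output;
}
```

No memory write is stored as raw text: every value is validated, screened for secrets, and redacted first. This keeps memory from becoming a sink for untrusted or sensitive input.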
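The sanitizer's regular expressions are not shown in the article. The sketch below reproduces the write-guardrail flow with hypothetical stand-in patterns (an `sk-`/`pk-`/`api_`-style key shape, a basic email pattern, and runs of nine or more digits) and a hypothetical 500-character limit, purely to illustrate how the checks compose:

```csharp
using System;
using System.Text.RegularExpressions;

// HYPOTHETICAL patterns: the article's KeyRegex, EmailRegex, and LongNumberRegex
// are not shown, so these stand-ins only illustrate the flow.
var keyRegex = new Regex(@"\b(?:sk|pk|api)[-_][A-Za-z0-9]{16,}\b");
var emailRegex = new Regex(@"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}");
var longNumberRegex = new Regex(@"\b\d{9,}\b");
const int maxWriteChars = 500; // hypothetical limit

bool ContainsBlockedSecret(string value)
{
    // Phrase checks run on lowercased text; the key pattern runs on the original.
    var text = value.ToLowerInvariant();
    if (text.Contains("password is", StringComparison.Ordinal) ||
        text.Contains("api key is", StringComparison.Ordinal) ||
        text.Contains("secret is", StringComparison.Ordinal))
    {
        return true;
    }
    return keyRegex.IsMatch(value);
}

string Redact(string value)
{
    var output = emailRegex.Replace(value, "[REDACTED_EMAIL]");
    output = keyRegex.Replace(output, "[REDACTED_KEY]");
    return longNumberRegex.Replace(output, "[REDACTED_NUMBER]");
}

bool TryPrepareForStorage(string rawValue, out string normalizedValue, out string reason)
{
    normalizedValue = string.Empty;
    reason = string.Empty;
    if (string.IsNullOrWhiteSpace(rawValue)) { reason = "Value is empty."; return false; }
    if (rawValue.Length > maxWriteChars) { reason = $"Value exceeds max length {maxWriteChars} chars."; return false; }
    if (ContainsBlockedSecret(rawValue)) { reason = "Value looks like a secret and was blocked."; return false; }
    normalizedValue = Redact(rawValue.Trim());
    return true;
}

var accepted = TryPrepareForStorage(
    "  Reach me at jane.doe@example.com or 5551234567  ", out var stored, out _);
Console.WriteLine($"{accepted}: {stored}");
// True: Reach me at [REDACTED_EMAIL] or [REDACTED_NUMBER]

var blocked = !TryPrepareForStorage("My password is hunter2", out _, out var why);
Console.WriteLine($"{blocked}: {why}");
// True: Value looks like a secret and was blocked.
```

The ordering is the design choice worth copying: secret-like input is rejected before redaction ever runs, so redaction only has to handle contact details and identifiers in otherwise acceptable text.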

Knowledge Memory with Safe Fallback
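Both retrieval modes in this section reduce to a scoring function followed by a deterministic sort. The article does not show `VectorMath.CosineSimilarity` or its lexical scorer, so the following is a minimal, hypothetical sketch: cosine similarity when an embedding function succeeds, and Jaccard token overlap (a stand-in lexical scorer chosen for illustration) when it throws. `Rank`, `Tokenize`, and the toy documents are illustrative names only:

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// HYPOTHETICAL sketch: cosine similarity when embedding succeeds, Jaccard token
// overlap (a stand-in lexical scorer) when it throws. Not the article's code.
static double Cosine(float[] a, float[] b)
{
    if (a.Length != b.Length) throw new ArgumentException("Vector lengths differ.");
    double dot = 0, normA = 0, normB = 0;
    for (var i = 0; i < a.Length; i++)
    {
        dot += a[i] * b[i];
        normA += a[i] * a[i];
        normB += b[i] * b[i];
    }
    return normA == 0 || normB == 0 ? 0 : dot / (Math.Sqrt(normA) * Math.Sqrt(normB));
}

static HashSet<string> Tokenize(string text) =>
    text.ToLowerInvariant()
        .Split(new[] { ' ', '\n', '\t', ',', '.', ':', ';', '?', '!' }, StringSplitOptions.RemoveEmptyEntries)
        .ToHashSet(StringComparer.Ordinal);

static double Jaccard(HashSet<string> a, HashSet<string> b)
{
    var overlap = a.Count(t => b.Contains(t));
    var union = a.Count + b.Count - overlap;
    return union == 0 ? 0 : overlap / (double)union;
}

static List<(string Id, double Score)> Rank(
    string query, IReadOnlyList<(string Id, string Text)> docs, Func<string, float[]> embed, int topK)
{
    List<(string Id, double Score)> scored;
    try
    {
        var queryVector = embed(query);
        scored = docs.Select(d => (Id: d.Id, Score: Cosine(embed(d.Text), queryVector))).ToList();
    }
    catch (Exception) // sketch only; real code should let cancellation propagate
    {
        var queryTokens = Tokenize(query);
        scored = docs.Select(d => (Id: d.Id, Score: Jaccard(Tokenize(d.Text), queryTokens))).ToList();
    }

    // Deterministic ordering: score first, then ordinal document ID.
    return scored
        .OrderByDescending(h => h.Score)
        .ThenBy(h => h.Id, StringComparer.Ordinal)
        .Take(topK)
        .ToList();
}

var sampleDocs = new List<(string Id, string Text)>
{
    ("KB-002", "Tailor your resume to each job description."),
    ("KB-001", "Follow up within one week after an interview."),
};

// Simulate an unavailable embedding service to force the lexical path.
Func<string, float[]> failingEmbed = _ => throw new InvalidOperationException("embedding service down");
var lexicalHits = Rank("how to tailor a resume", sampleDocs, failingEmbed, topK: 2);
Console.WriteLine(string.Join(", ", lexicalHits.Select(h => h.Id))); // KB-002, KB-001
```

Unlike the article's ranking code, this sketch has no `minScore` filter, and it falls back per query rather than once at index build time.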

The knowledge index build prefers embeddings but falls back to lexical retrieval if any embedding call fails:

```csharp
public static async Task<KnowledgeMemoryStoreBuildResult> BuildAsync(
    IEnumerable<KnowledgeDocument> documents,
    IEmbeddingClient embeddingClient,
    CancellationToken cancellationToken = default)
{
    // Sort by ID so the index is built in the same order on every run.
    var ordered = documents
        .OrderBy(x => x.Id, StringComparer.Ordinal)
        .ToList();

    try
    {
        var withVectors = new List<IndexedKnowledge>(ordered.Count);
        foreach (var doc in ordered)
        {
            var vector = await embeddingClient.EmbedAsync(
                $"{doc.Title}\n{doc.Content}",
                cancellationToken);

            // Lexical tokens are stored alongside the vector.
            withVectors.Add(new IndexedKnowledge(
                doc,
                vector,
                Tokenize($"{doc.Title} {doc.Content}")));
        }

        return new KnowledgeMemoryStoreBuildResult(
            new KnowledgeMemoryStore(withVectors, embeddingClient),
            UsesEmbeddings: true,
            Warning: null);
    }
    // Any embedding failure degrades the whole index to lexical-only.
    // Cancellation is not caught here, so it propagates to the caller
    // instead of being silently treated as an embedding failure.
    catch (Exception ex) when (ex is not OperationCanceledException)
    {
        var lexicalOnly = ordered
            .Select(doc => new IndexedKnowledge(doc, null, Tokenize($"{doc.Title} {doc.Content}")))
            .ToList();

        return new KnowledgeMemoryStoreBuildResult(
            new KnowledgeMemoryStore(lexicalOnly, embeddingClient: null),
            UsesEmbeddings: false,
            Warning: $"Embedding index unavailable; switched to lexical fallback. {ex.Message}");
    }
}
```

Ranking stays deterministic after scoring, with ties broken by document ID:

```csharp
// queryVector, minScore, and topK come from the enclosing search method (not shown).
return _index
    .Select(entry => new
    {
        entry.Document,
        // Entries without a vector score 0 on this embedding path.
        Score = entry.Vector is null
            ? 0f
            : VectorMath.CosineSimilarity(entry.Vector, queryVector)
    })
    .Where(hit => hit.Score >= minScore)
    .OrderByDescending(hit => hit.Score)
    .ThenBy(hit => hit.Document.Id, StringComparer.Ordinal) // stable tie-break
    .Take(topK)
    .Select(hit => new KnowledgeHit(
        hit.Document.Id,
        hit.Document.Title,
        hit.Document.Source,
        BuildSnippet(hit.Document.Content, 180),
        Math.Round(hit.Score, 3)))
    .ToList();
```

Context Budgeting as the Control Plane

The budgeter packs blocks in a stable order and records every exclusion with an explicit reason:

```csharp
// Required blocks first, then higher priority, then newer, then ID as a stable tie-break.
var orderedCandidates = request.Candidates
    .OrderByDescending(static block => block.IsRequired)
    .ThenByDescending(static block => block.Priority)
    .ThenByDescending(static block => block.ObservedAtUtc)
    .ThenBy(static block => block.BlockId, StringComparer.Ordinal);

foreach (var block in orderedCandidates)
{
    var tokenCount = EstimateBlockTokens(block);
    if (tokenCount <= remainingContextTokens)
    {
        included.Add(new PackedContextBlock(block, tokenCount));
        remainingContextTokens -= tokenCount;
        continue;
    }

    // Greedy first-fit: a block that does not fit is recorded with a reason,
    // and smaller blocks later in the order can still be packed.
    var reason = block.IsRequired
        ? ExclusionReason.RequiredBlockTooLargeForBudget
        : ExclusionReason.ExceedsRemainingBudget;
    excluded.Add(new ExcludedContextBlock(block, tokenCount, reason));
}
```

The proceed status depends only on whether a required block overflowed the budget:

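```csharp
// True only if no required block was excluded.
public bool CanProceed =>
    Excluded.All(x => x.Reason != ExclusionReason.RequiredBlockTooLargeForBudget);
```

This flag is the hard gate for downstream behavior: answer generation checks it before producing any output.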
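A hypothetical, self-contained version of the packing loop makes the `CanProceed` rule easy to test. Blocks are tuples with precomputed token counts instead of the article's block types and `EstimateBlockTokens`, and exclusion reasons are plain strings that mirror the `ExclusionReason` names:

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// HYPOTHETICAL sketch of the packing loop: tuples with precomputed token counts
// replace the article's block types and EstimateBlockTokens.
static (List<string> Included, List<(string Id, string Reason)> Excluded, int UsedTokens) Pack(
    int availableTokens,
    IEnumerable<(string Id, bool IsRequired, int Priority, DateTimeOffset ObservedAtUtc, int Tokens)> candidates)
{
    var included = new List<string>();
    var excluded = new List<(string Id, string Reason)>();
    var remaining = availableTokens;

    // Same ordering keys as the article: required, priority, recency, ID.
    var ordered = candidates
        .OrderByDescending(b => b.IsRequired)
        .ThenByDescending(b => b.Priority)
        .ThenByDescending(b => b.ObservedAtUtc)
        .ThenBy(b => b.Id, StringComparer.Ordinal);

    foreach (var block in ordered)
    {
        if (block.Tokens <= remaining)
        {
            included.Add(block.Id);
            remaining -= block.Tokens;
            continue;
        }
        excluded.Add((block.Id, block.IsRequired ? "RequiredBlockTooLargeForBudget" : "ExceedsRemainingBudget"));
    }

    return (included, excluded, availableTokens - remaining);
}

static bool CanProceed(List<(string Id, string Reason)> excluded) =>
    excluded.All(x => x.Reason != "RequiredBlockTooLargeForBudget");

var t0 = new DateTimeOffset(2025, 1, 1, 9, 0, 0, TimeSpan.Zero);
var blocks = new (string Id, bool IsRequired, int Priority, DateTimeOffset ObservedAtUtc, int Tokens)[]
{
    ("query", true, 100, t0, 40),
    ("user-prefs", false, 50, t0, 300),
    ("kb-1", false, 30, t0.AddMinutes(1), 500),
    ("kb-2", false, 30, t0, 100),
};

var roomy = Pack(600, blocks);
Console.WriteLine(string.Join(", ", roomy.Included)); // query, user-prefs, kb-2
Console.WriteLine(CanProceed(roomy.Excluded));        // True

var tight = Pack(30, blocks);
Console.WriteLine(CanProceed(tight.Excluded));        // False
```

With 600 tokens, `kb-1` (500 tokens) no longer fits after the first two blocks, but the smaller `kb-2` still does, so 440 tokens are used and the run may proceed. With 30 tokens, the required 40-token query block cannot fit, and `CanProceed` turns false regardless of what else was packed.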

Orchestration Across Layers

The orchestrator merges the memory layers into candidate blocks, packs them, and estimates cost before any answer is produced:

```csharp
var request = new PromptBudgetRequest(
    config.ModelContextWindowTokens,
    config.ReservedOutputTokens,
    config.FixedPromptTokens,
    candidates); // query, memory, and knowledge blocks built earlier (not shown)

var budgetingResult = contextBudgeter.Pack(request);
var packedPrompt = BuildPackedPrompt(budgetingResult);

// Upper-bound estimate: assumes the full output reservation is consumed.
var estimatedTokens = config.FixedPromptTokens
    + budgetingResult.UsedContextTokens
    + config.ReservedOutputTokens;
var estimatedCostUsd = (estimatedTokens / 1000.0d) * config.EstimatedCostPer1KTokensUsd;
```

This keeps memory assembly and cost estimation explicit and auditable.
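The cost line is plain arithmetic. A worked example with hypothetical numbers (512 fixed prompt tokens, 440 packed context tokens, 1,024 reserved output tokens, and an assumed rate of $0.002 per 1K tokens), using an illustrative `EstimateCostUsd` helper that mirrors the formula above:

```csharp
using System;
using System.Globalization;

// Mirrors the orchestrator's formula; all inputs below are HYPOTHETICAL values.
static double EstimateCostUsd(int fixedPromptTokens, int usedContextTokens, int reservedOutputTokens, double costPer1KTokensUsd)
{
    // Counts the full output reservation, so this is an upper-bound estimate.
    var estimatedTokens = fixedPromptTokens + usedContextTokens + reservedOutputTokens;
    return estimatedTokens / 1000.0 * costPer1KTokensUsd;
}

var estimate = EstimateCostUsd(fixedPromptTokens: 512, usedContextTokens: 440, reservedOutputTokens: 1024, costPer1KTokensUsd: 0.002);
Console.WriteLine(estimate.ToString("F6", CultureInfo.InvariantCulture)); // 0.003952
```

That is (512 + 440 + 1,024) / 1,000 × 0.002 = 0.003952 USD. Many providers price input and output tokens differently, so a single blended per-1K rate is a simplification.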

Evidence-Only Answer Generation

Answer generation is template-based and refuses to proceed when evidence guarantees are broken:

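```csharp
// Refuse if a required block was dropped by the budgeter.
if (!context.BudgetingResult.CanProceed)
{
    return Task.FromResult("I don't know. Required memory context could not be packed within budget.");
}

// Refuse if retrieval found no evidence to cite.
if (context.KnowledgeHits.Count == 0)
{
    return Task.FromResult("I don't know.");
}
```

Recommended actions are quoted from retrieved evidence, and each one carries a citation: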

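```csharp
sb.AppendLine("Recommended actions (verbatim evidence):");

// At most three hits; each contributes one quoted sentence plus its document ID.
foreach (var hit in knowledgeHits.Take(3))
{
    var sentence = PickEvidenceSentence(hit.Snippet);
    if (string.IsNullOrWhiteSpace(sentence))
    {
        continue; // no usable sentence, so no claim and no citation
    }

    sb.AppendLine($"- \"{sentence}\" [{hit.Id}]");
    citations.Add(hit.Id);
}
```

When context is weak, the answer gets shorter; the system does not invent unsupported advice to fill the gap.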
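The article does not show `PickEvidenceSentence`. Assuming it returns the first sentence of a snippet, a self-contained sketch of the whole evidence-only answer path could look like this (the tuple-based hits and the `BuildAnswer` name are hypothetical):

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Text;

// HYPOTHETICAL: returns the first sentence (through the first '.') of a snippet.
static string PickEvidenceSentence(string snippet)
{
    if (string.IsNullOrWhiteSpace(snippet)) return string.Empty;
    var end = snippet.IndexOf('.');
    return (end >= 0 ? snippet.Substring(0, end + 1) : snippet).Trim();
}

static (string Text, List<string> Citations) BuildAnswer(bool canProceed, IReadOnlyList<(string Id, string Snippet)> hits)
{
    var citations = new List<string>();
    if (!canProceed)
        return ("I don't know. Required memory context could not be packed within budget.", citations);
    if (hits.Count == 0)
        return ("I don't know.", citations);

    var sb = new StringBuilder();
    sb.AppendLine("Recommended actions (verbatim evidence):");
    foreach (var hit in hits.Take(3))
    {
        var sentence = PickEvidenceSentence(hit.Snippet);
        if (string.IsNullOrWhiteSpace(sentence)) continue; // nothing quotable, nothing cited
        sb.AppendLine($"- \"{sentence}\" [{hit.Id}]");
        citations.Add(hit.Id);
    }
    return (sb.ToString(), citations);
}

var evidence = new List<(string Id, string Snippet)>
{
    ("KB-002", "Tailor your resume to each job description. Keep it to one page."),
    ("KB-005", "   "),
};
var answer = BuildAnswer(true, evidence);
Console.Write(answer.Text);
// Recommended actions (verbatim evidence):
// - "Tailor your resume to each job description." [KB-002]
```

One case worth checking in your own version: if every snippet yields an empty sentence, this sketch (like the snippet above, unless handled elsewhere) returns only the header line with no citations, rather than "I don't know."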

Evaluation Snapshot as a Reliability Gate

Evaluation tracks retrieval quality and operational control signals:

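```csharp
// Retrieval quality: did the expected documents show up in the retrieved hits?
var actualIds = context.KnowledgeHits.Select(x => x.Id).ToList();
recalls.Add(RecallAtK(testCase.ExpectedKnowledgeIds, actualIds));

// Freshness: share of stored entries (both layers) counted as stale.
var totalMemory = sessionMemory.CountTotal() + userMemory.CountTotal();
var staleMemory = sessionMemory.CountStale(nowUtc) + userMemory.CountStale(nowUtc);
var staleRate = totalMemory == 0
    ? 0
    : staleMemory / (double)totalMemory;

// Consistency: conflict rate among the user's stored facts.
var conflictRate = userMemory.GetConflictRate(userId, nowUtc);

// Operational gate: average latency and average cost must both stay within configured limits.
var budgetPass = avgLatency <= config.MaxAverageLatencyMs
    && avgCost <= config.MaxAverageCostUsd;
```

This keeps memory quality measurable over time rather than assumed.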
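`RecallAtK` is not shown either. A common definition, used here as an assumption, is the fraction of expected document IDs that appear in the retrieved top-K list. The sketch below pairs it with the stale-rate and budget-gate formulas above; treating an empty expectation as a pass (1.0) is also an assumption, not the article's rule:

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// HYPOTHETICAL definitions; the article's RecallAtK and stale counting are not shown.
// Recall@K: fraction of expected IDs found in the retrieved (already top-K) list.
static double RecallAtK(IReadOnlyCollection<string> expectedIds, IReadOnlyCollection<string> actualIds)
{
    if (expectedIds.Count == 0) return 1.0; // assumption: nothing expected counts as a pass
    var actual = new HashSet<string>(actualIds, StringComparer.Ordinal);
    return expectedIds.Count(id => actual.Contains(id)) / (double)expectedIds.Count;
}

static double StaleRate(int staleCount, int totalCount) =>
    totalCount == 0 ? 0 : staleCount / (double)totalCount;

static bool BudgetPass(double avgLatencyMs, double maxAvgLatencyMs, double avgCostUsd, double maxAvgCostUsd) =>
    avgLatencyMs <= maxAvgLatencyMs && avgCostUsd <= maxAvgCostUsd;

var recalls = new List<double>
{
    RecallAtK(new[] { "KB-001", "KB-002" }, new[] { "KB-002", "KB-007", "KB-003" }), // 0.5
    RecallAtK(new[] { "KB-004" }, new[] { "KB-004" }),                               // 1.0
};
var meanRecall = recalls.Average();            // 0.75
var staleRate = StaleRate(3, 12);              // 0.25
var pass = BudgetPass(820, 1500, 0.004, 0.01); // true

Console.WriteLine($"recall={meanRecall}, stale={staleRate}, budgetPass={pass}");
```

Tracking these numbers per run, rather than checking them once, is what lets regressions in retrieval or memory freshness show up as drift.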

Why This Architecture Works

The model stays useful, but memory authority remains in deterministic code:

- Memory writes are validated and sanitized before persistence

- Session and user memory lifetimes are explicit and enforceable

- Retrieval supports semantic ranking with deterministic fallback behavior

- Context inclusion is budgeted, inspectable, and reproducible

- Required-memory overflow blocks execution explicitly

- Answers are evidence-only with citation boundaries

- Evaluation includes quality, freshness, conflict, latency, and cost gates

Potential Enhancements

To improve the system further, you could:

- Add persistent memory backends while preserving write guardrails and ordering guarantees

- Add memory compaction and summary transforms as explicit, audited jobs

- Add adversarial memory-write tests for prompt injection and sensitive data patterns

- Add regression datasets for stale-memory and conflict-rate drift

- Add reranking or metadata filtering on top of current deterministic tie-breaking

Final Notes

Memory architecture is not about storing more text. It is about controlling what becomes memory, for how long, with what safety checks, under what budget, and with what evidence guarantees.

When memory is typed, guarded, budgeted, and evaluated, it becomes production infrastructure instead of hidden prompt state.

Explore the source code at the GitHub repository.

See you in the next issue.

Stay curious.
